More Context Is Not Better

The biggest thing I’ve learned building AI coding workflows: more context is not better.

I work in web3. ABIs that are thousands of lines. A Ponder database schema that would eat half the context window if I fed it in raw. My first instinct was to give the AI everything and let it figure out what matters.

That doesn’t work. The AI gets lost. Important instructions get ignored. It latches onto random details. Results get worse as context gets bigger.

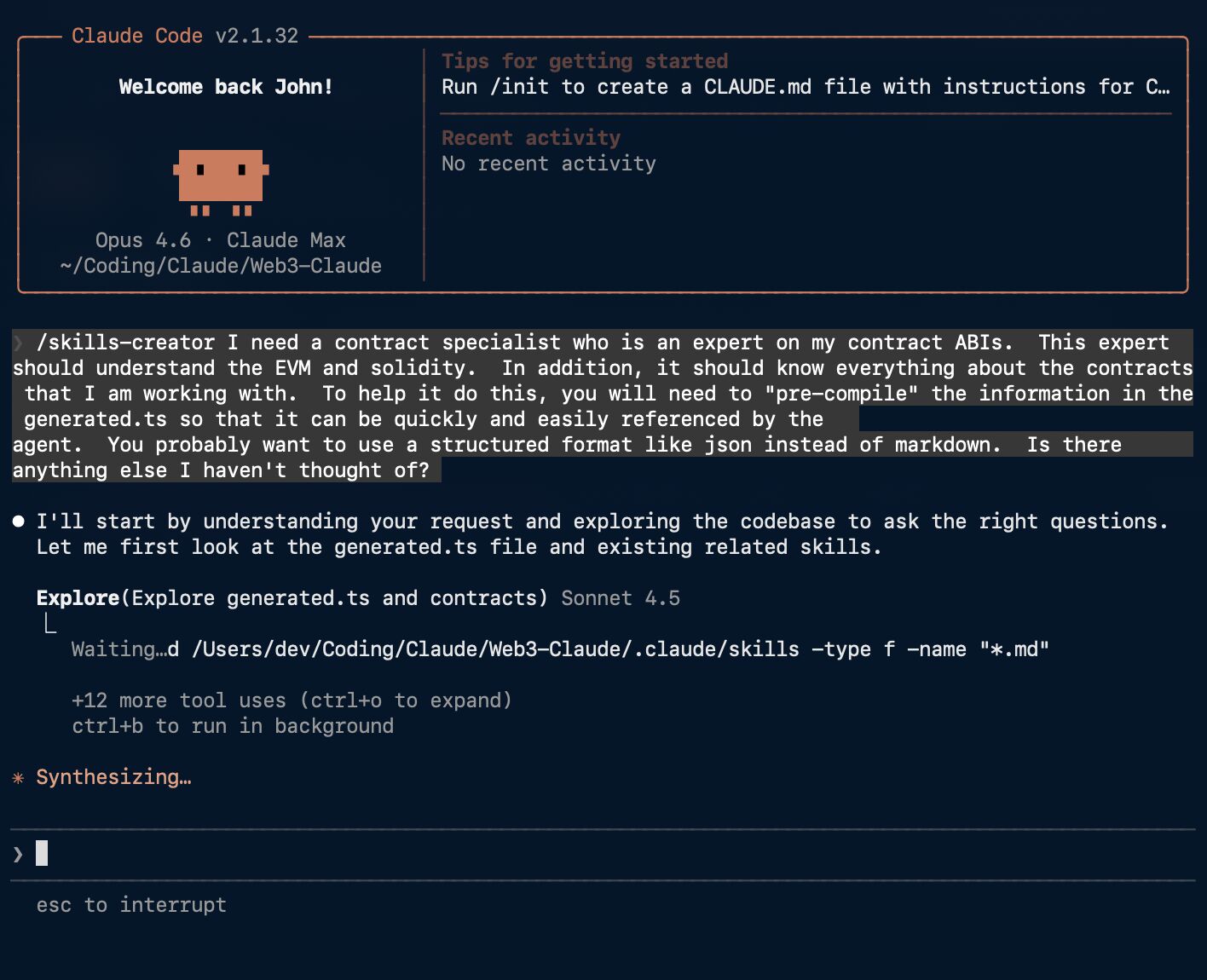

So I built a skills-specialist agent.

Its only job is precompiling context for other agents. It reads my raw ABIs, schemas, and docs, then generates slim reference files tailored to specific tasks. It builds skills.

When I need UI work done, I don’t hand my ui-designer the entire codebase. The skills-specialist has already built a component reference with just the props and patterns that agent needs. When I need contract integration, the web3-implementer gets only the relevant functions and events. The raw ABI stays on disk.
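To make the contract-integration case concrete, here is a minimal sketch of the kind of slimming step such an agent might perform. It is not my actual tooling: `slimAbi`, `toReference`, the `AbiEntry` type, and the ERC-20-style sample are all hypothetical names and data chosen for illustration. The idea is only that the reference file carries the handful of functions and events a task needs, one line each.

```typescript
// Minimal shape of an ABI entry; real ABIs carry more fields than this.
type AbiParam = { name: string; type: string };
type AbiEntry = {
  type: string; // "function", "event", "constructor", "error", ...
  name?: string;
  inputs?: AbiParam[];
  outputs?: AbiParam[];
};

// Hypothetical helper: keep only the named functions and events.
function slimAbi(abi: AbiEntry[], keep: string[]): AbiEntry[] {
  const wanted = new Set(keep);
  return abi.filter(
    (e) =>
      (e.type === "function" || e.type === "event") &&
      e.name !== undefined &&
      wanted.has(e.name),
  );
}

// Hypothetical helper: render one compact signature line per entry.
function toReference(abi: AbiEntry[]): string {
  return abi
    .map((e) => {
      const args = (e.inputs ?? []).map((p) => `${p.type} ${p.name}`).join(", ");
      const outs = e.outputs ?? [];
      const returns =
        outs.length > 0 ? ` returns (${outs.map((p) => p.type).join(", ")})` : "";
      return `${e.type} ${e.name}(${args})${returns}`;
    })
    .join("\n");
}

// Illustrative ERC-20-style fragment standing in for a raw ABI on disk.
const rawAbi: AbiEntry[] = [
  {
    type: "function",
    name: "transfer",
    inputs: [
      { name: "to", type: "address" },
      { name: "amount", type: "uint256" },
    ],
    outputs: [{ name: "", type: "bool" }],
  },
  {
    type: "function",
    name: "approve",
    inputs: [
      { name: "spender", type: "address" },
      { name: "amount", type: "uint256" },
    ],
    outputs: [{ name: "", type: "bool" }],
  },
  {
    type: "event",
    name: "Transfer",
    inputs: [
      { name: "from", type: "address" },
      { name: "to", type: "address" },
      { name: "value", type: "uint256" },
    ],
  },
];

// The task only touches transfers, so only those two entries survive.
const reference = toReference(slimAbi(rawAbi, ["transfer", "Transfer"]));
console.log(reference);
```

The filter is case-sensitive on purpose: `transfer` the function and `Transfer` the event are different entries, and the agent should see both only when the task asks for both.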

Agents building context for agents.

The mental model is a memory hierarchy. The full codebase is disk. Precompiled skills are RAM. You don’t load everything into memory; you load what you need for the current task.
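A rough sketch of the "load what you need" step, under stated assumptions: the skill names (`component-reference`, `contract-reference`), their contents, the agent-to-skill mapping, and the `buildContext` function are all hypothetical, and an in-memory object stands in for skill files on disk. Only the agent names come from my setup.

```typescript
// Hypothetical in-memory stand-in for precompiled skill files.
const skills: Record<string, string> = {
  "component-reference": "Button: props { label, onClick, variant }",
  "contract-reference":
    "function transfer(address to, uint256 amount) returns (bool)",
};

// Which skills each agent loads; this mapping is an assumption.
const agentSkills: Record<string, string[]> = {
  "ui-designer": ["component-reference"],
  "web3-implementer": ["contract-reference"],
};

// Assemble the context for one agent: the task plus only its own skills.
function buildContext(agent: string, task: string): string {
  const names = agentSkills[agent] ?? [];
  const loaded = names.map((name) => {
    const body = skills[name];
    if (body === undefined) throw new Error(`Missing skill: ${name}`);
    return `## ${name}\n${body}`;
  });
  return [`Task: ${task}`, ...loaded].join("\n\n");
}

const uiContext = buildContext("ui-designer", "Add a confirm button");
console.log(uiContext);
```

The ui-designer's context never contains the contract reference, and the reverse holds for the web3-implementer. Everything else stays "on disk" until a task calls for it.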

The skills-specialist is the piece that makes the whole system work. Without it, I’m back to stuffing context and hoping for the best.