RL Know vs RL Do: Why AI Learned to Act

You’ve heard the buzz: AI feels different now.

Here’s why.

For years, most AI was trained to know things.

Now it’s being trained to do things.

That sounds subtle. It isn’t.

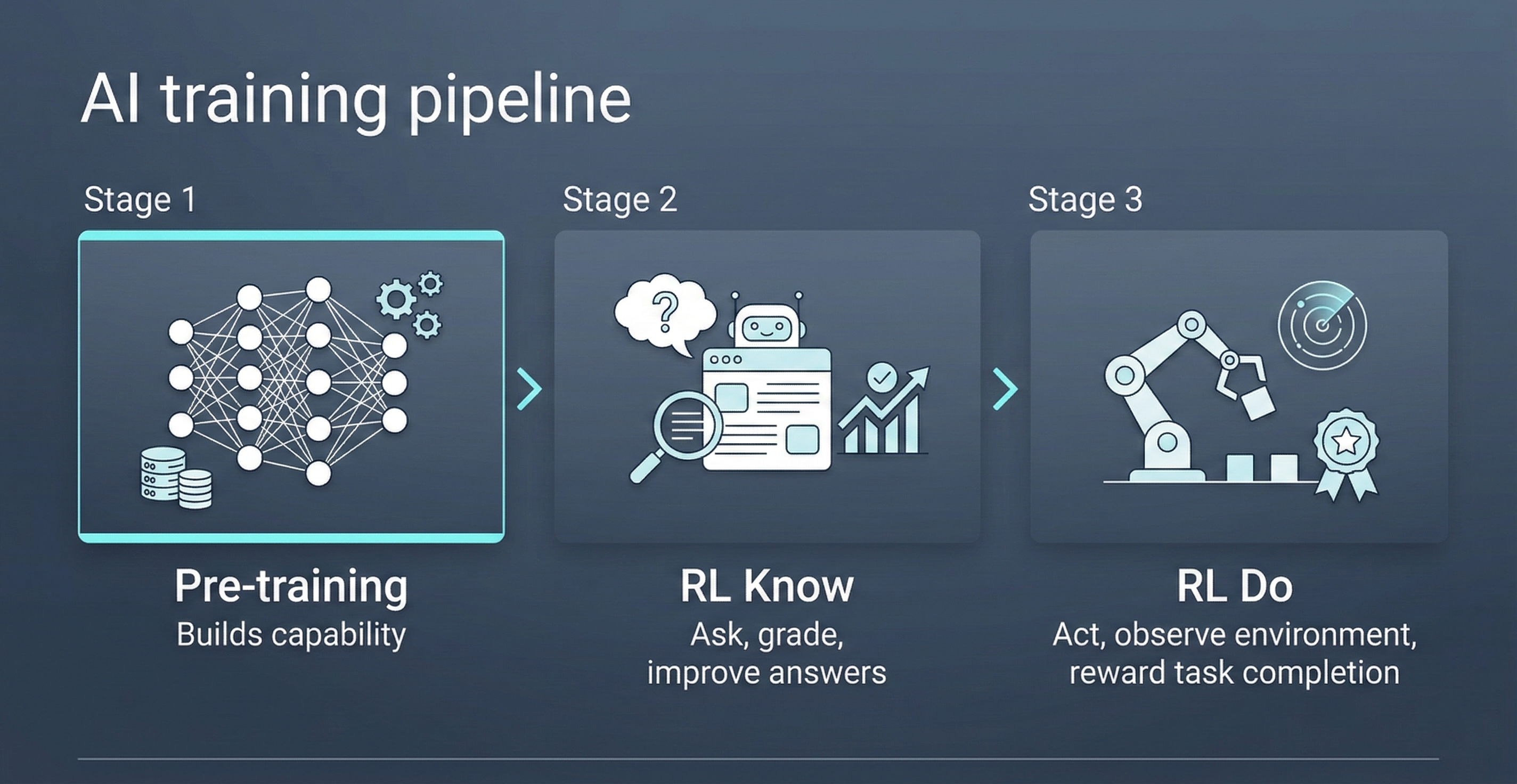

There are three stages to this shift:

Stage 1: Pre-training.

Train on massive amounts of text. The model learns language, facts, patterns, and reasoning by predicting the next token billions of times. This builds raw capability.

Stage 2: RL Know. ( My term, not standard. )

By “RL Know,” I mean reinforcement learning for knowledge work: ask a question, grade the response, and train toward better answers.

Stage 3: RL Do. ( Also my term, not standard. )

By “RL Do,” I mean reinforcement learning for doing: give the model tools, let it act in an environment, and reward actual task completion.

That’s the frontier.

The model doesn’t just generate an answer. It takes action.

It uses tools. It writes code. It calls APIs. It runs tests.

Then it gets environmental feedback:

Did it work? Did it fail? Did it finish?

Since late 2025, a lot of visible progress has come from this exact loop.

Not just better text generation.

Better training in environments where success is measurable.

That is why labs like Mechanize matter. They are pushing on a simple idea: agents improve faster from real outcomes than from rated answers alone.

That’s the difference people are feeling.

A model that can explain a refactor is useful.

A model that can execute it, verify it, and recover from failure feels fundamentally different.

Code was the first obvious proving ground.

It will not be the last.

The progression is simple:

Pre-training builds capability. RL Know refines judgment. RL Do trains action.

That’s why AI no longer feels like just a chatbot.

It feels like an agent.

And the next big gains will come from environments with explicit state, objective outcomes, and clear reward signals.