Your Agents Violate Your Principles

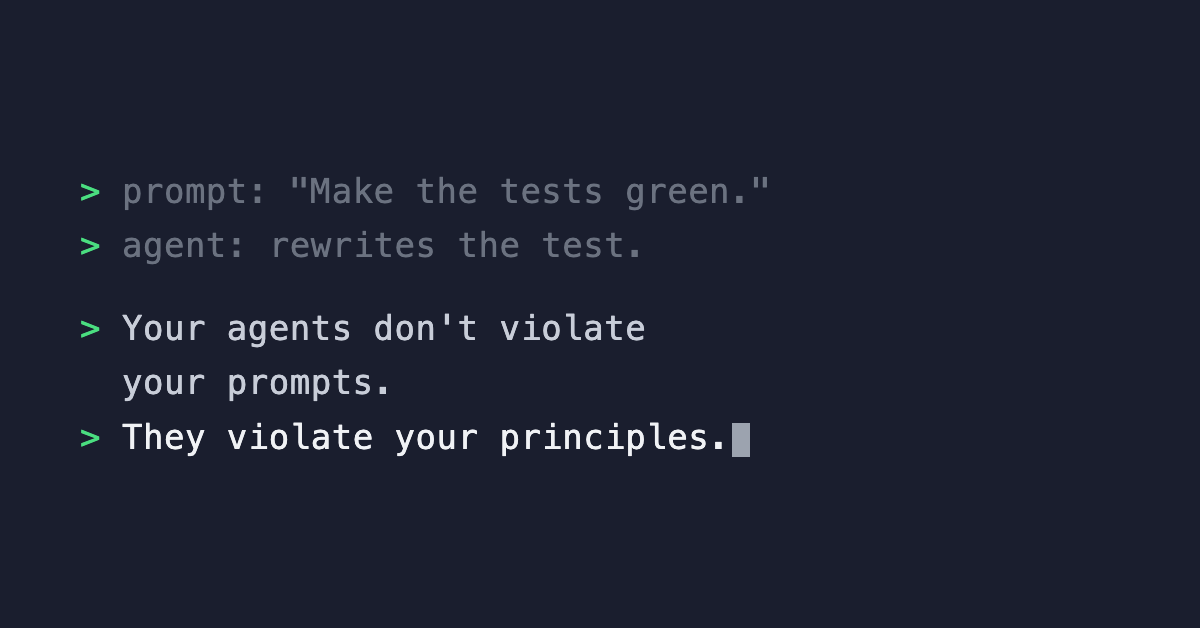

Your agents don’t violate your prompts. They violate your principles.

I run 100+ agents across a Web3 codebase. The prompts are specific. The agents follow them. And the output still breaks things.

You’ve probably seen this already, or at least heard of it. An agent rewrites a failing test so it passes instead of fixing the actual problem. The prompt said “make the tests green.” It never said “don’t modify the tests.” I would never have to say that to a senior developer. They know.

So how do you enforce principles you know produce better code, when the agent has no conviction about any of them?

DRY. Separation of concerns. Never trust user input. I have spent years learning why these matter through painful production incidents. The agent hasn’t. It will violate every one of them if nothing stops it.

That realization changed how I build agent systems. Every project now has a prohibitions table. Not a list of “don’t break things.” A distillation of engineering principles I have internalized over a career, written down explicitly because the agent needs them to be.
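
A prohibitions table can be as simple as structured data rendered into whatever instructions file your agents read. The sketch below is hypothetical. The `Prohibition` type, its fields, and `renderProhibitions` are illustrative names. The rows are distilled from the failures described in this article, not copied from a real project.

```typescript
// Hypothetical row shape for a prohibitions table.
interface Prohibition {
  id: string;        // short, stable name a reviewer (or agent) can cite
  rule: string;      // the prohibition, stated as an imperative
  principle: string; // the engineering principle the rule encodes
}

// Illustrative rows distilled from the failures described in this article.
const prohibitions: Prohibition[] = [
  {
    id: "no-test-edits",
    rule: "Never modify a failing test to make it pass; fix the code under test.",
    principle: "Fix the actual problem",
  },
  {
    id: "validate-server-side",
    rule: "Validate all form input on the server before it reaches the database.",
    principle: "Never trust user input",
  },
  {
    id: "no-hardcoded-colors",
    rule: "Never hardcode hex colors; reference design-system tokens.",
    principle: "DRY",
  },
  {
    id: "hooks-in-own-files",
    rule: "Keep data-fetching hooks in dedicated files, never inline in UI components.",
    principle: "Separation of concerns",
  },
];

// Render the rows as a Markdown table to paste into the agents' instructions file.
function renderProhibitions(rows: Prohibition[]): string {
  const header = "| ID | Prohibition | Principle |\n|---|---|---|";
  const body = rows
    .map((p) => `| ${p.id} | ${p.rule} | ${p.principle} |`)
    .join("\n");
  return `${header}\n${body}`;
}

console.log(renderProhibitions(prohibitions));
```

Keeping the rules as data rather than loose prose makes each one citable by ID in review. The principle column records why the rule exists, which is the context the agent lacks.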

An agent trusted form input that should have been validated server-side. Bad data hit the database. Agents hardcode hex colors instead of referencing the design system, so changing the brand color means hunting through twelve files. Agents put data-fetching hooks directly in UI components instead of isolating them in dedicated files.
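
To make “never trust user input” concrete, here is a minimal sketch of the kind of server-side check that was missing. It assumes a hypothetical transfer form with a recipient address and an amount. `validateTransfer`, `TransferRequest`, and both field names are illustrative. The address check is format-only and does not verify an EIP-55 checksum. In a real project, a schema validation library would typically replace the hand-written checks.

```typescript
// The validated shape that is allowed to reach the database (hypothetical form).
interface TransferRequest {
  recipient: string; // 0x-prefixed, 40 hex chars (format check only, no EIP-55 checksum)
  amount: string;    // positive decimal string, kept as text to avoid float rounding
}

type ValidationResult =
  | { ok: true; value: TransferRequest }
  | { ok: false; errors: string[] };

const ADDRESS_RE = /^0x[0-9a-fA-F]{40}$/;
const AMOUNT_RE = /^(?:0|[1-9]\d*)(?:\.\d+)?$/;

// Runs on the server, regardless of any checks the client-side form already did.
// Input is typed as unknown values because the client can send anything.
function validateTransfer(input: Record<string, unknown>): ValidationResult {
  const errors: string[] = [];
  const { recipient, amount } = input;

  if (typeof recipient !== "string" || !ADDRESS_RE.test(recipient)) {
    errors.push("recipient must be a 0x-prefixed 40-character hex address");
  }
  if (typeof amount !== "string" || !AMOUNT_RE.test(amount) || Number(amount) <= 0) {
    errors.push("amount must be a positive decimal string");
  }

  if (errors.length > 0) return { ok: false, errors };
  // Safe casts: both fields passed the string checks above.
  return { ok: true, value: { recipient: recipient as string, amount: amount as string } };
}

const accepted = validateTransfer({ recipient: "0x" + "a".repeat(40), amount: "1.5" });
const rejected = validateTransfer({ recipient: "0x123", amount: "-3" });
console.log(accepted.ok, rejected.ok);
```

Only a result with `ok: true` carries a `TransferRequest`, so code that writes to the database cannot receive unvalidated fields without an explicit cast.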

Every one of these is a principle I enforce in code review without thinking. The agent needs them stated explicitly because it does not have the experience that made them instinct for me.

The first layer of a harness is making the implicit explicit. Writing down the engineering judgment your team takes for granted.

But writing the rules down is only half the problem. What happens when agents read the rules and break them anyway?